As part of this week's MWC 2024 conference, Intel is announcing that it is adding support for its vPro security technologies to select 14th Generation Core series processors (Raptor Lake-R) and its latest Meteor Lake-based Core Ultra H and U series mobile processors. As we've seen across more Intel desktop and mobile launches than we care to count, the company typically rolls out its vPro platforms sometime after it has released its full stack of processors, including overclockable K series SKUs and lower-powered T series SKUs, and this year is no exception. Altogether, Intel is announcing vPro Essentials and vPro Enterprise support for several 14th Gen Core series SKUs and Intel Core Ultra mobile SKUs.

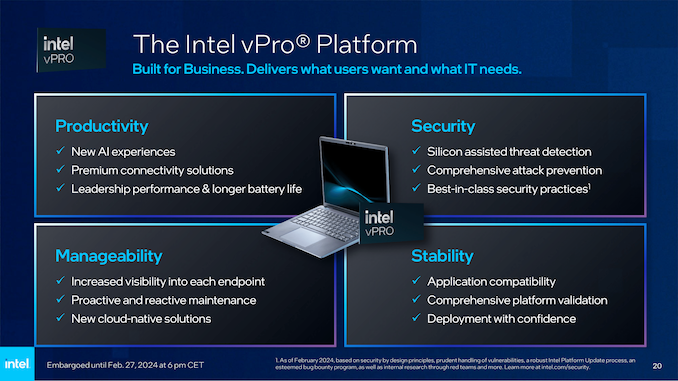

Intel's vPro security features are something we've covered previously, and on that note, Intel has a new Silicon Security Engine that gives the chips the ability to authenticate the system's firmware. Intel also states that Intel Threat Detection within vPro has been enhanced and adds an additional layer for the NPU, with an xPU model (CPU/GPU/NPU) to help detect a variety of attacks, while also enabling 3rd party software to run faster. Intel claims this is the only AI-based security deployment within a Windows PC to date. Both the full Enterprise feature set and the cut-down Essentials tier bring vPro hardware-level security to select 14th Gen Core series processors, as well as Intel's latest mobile-focused Meteor Lake processors with Arc graphics, which launched last year.

Intel 14th Gen vPro: Raptor Lake-R Gets Secured

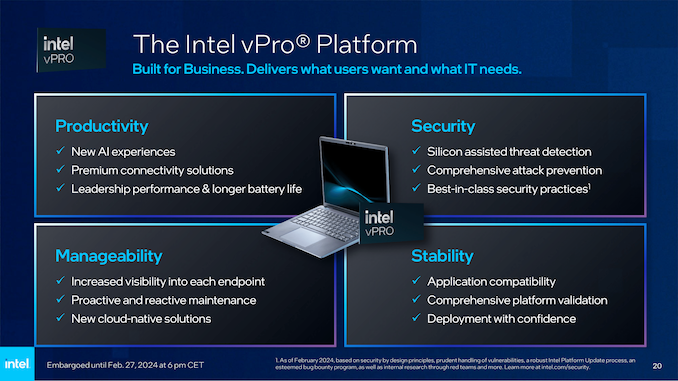

As we've seen over the last few years with the global shift towards remote work due to the Coronavirus pandemic, the need for up-to-date security in small and large enterprises is as critical as it has ever been. Remote workers and office-based employees alike must have access to the latest software and hardware frameworks to ensure the security of vital data, and that's where Intel vPro comes in.

To quickly recap the current state of affairs, let's take a look at the two levels of Intel vPro security available, vPro Essentials and vPro Enterprise, and how they differ.

Intel's vPro Essentials was first launched back in 2022 and is a subset of Intel's complete vPro package, which is now commonly known as vPro Enterprise. The Intel vPro Essentials security package is essentially (as per the name) tailored and designed for small businesses, providing a solid foundation in security without penalizing performance. It integrates hardware-enhanced security features, ensuring hardware-level protection against emerging threats right from installation. It also utilizes real-time intelligence for workload optimization and Intel's Threat Detection Technology, which adds an additional layer below the operating system that uses AI-based threat detection to mitigate OS-level threats and attacks.

Pivoting to Intel vPro Enterprise, this tier is designed for SMEs and for meeting the high demands of large-scale business environments. It offers advanced security features and remote management capabilities, which are crucial for businesses operating with sensitive data and requiring high levels of cybersecurity. Additionally, the platform provides enhanced performance and reliability, making it suitable for intensive workloads and multitasking in a professional setting. Integrating these features from the vPro Enterprise platform ensures that large enterprises can maintain high productivity levels while ensuring data security and efficient IT management with the latest generations of processors, such as the Intel Core 14th Gen family.

Much like we saw when Intel announced their vPro for the 13th Gen Core series, it's worth noting that both the 14th and 13th Gen Core series are based on the same Raptor Lake architecture and, as such, are identical in every aspect bar base and turbo core frequencies.

Intel 14th Gen Core with vPro for Desktop (Raptor Lake-R)

| AnandTech | Cores P+E/T | P-Core Base/Turbo (MHz) | E-Core Base/Turbo (MHz) | L3 Cache (MB) | Base W | Turbo W | vPro Support (Ent/Ess) | Price |
|---|---|---|---|---|---|---|---|---|
| i9-14900K | 8+16/32 | 3200 / 6000 | 2400 / 4400 | 36 | 125 | 253 | Enterprise | $589 |
| i9-14900 | 8+16/32 | 2000 / 5600 | 1500 / 4300 | 36 | 65 | 219 | Both | $549 |
| i9-14900T | 8+16/32 | 1100 / 5500 | 800 / 4000 | 36 | 35 | 106 | Both | $549 |
| i7-14700K | 8+12/28 | 3400 / 5600 | 2500 / 4300 | 33 | 125 | 253 | Enterprise | $409 |
| i7-14700 | 8+12/28 | 2100 / 5400 | 1500 / 4200 | 33 | 65 | 219 | Both | $384 |
| i7-14700T | 8+12/28 | 1300 / 5000 | 900 / 3700 | 33 | 35 | 106 | Both | $384 |
| i5-14600K | 6+8/20 | 3500 / 5300 | 2600 / 4000 | 24 | 125 | 181 | Enterprise | $319 |
| i5-14600 | 6+8/20 | 2700 / 5200 | 2000 / 3900 | 24 | 65 | 154 | Both | $255 |
| i5-14500 | 6+8/20 | 2600 / 5000 | 1900 / 3700 | 24 | 65 | 154 | Both | $232 |
| i5-14600T | 6+8/20 | 1800 / 5100 | 1200 / 3600 | 24 | 35 | 92 | Both | $255 |
| i5-14500T | 6+8/20 | 1700 / 4800 | 1200 / 3400 | 24 | 35 | 92 | Both | $232 |

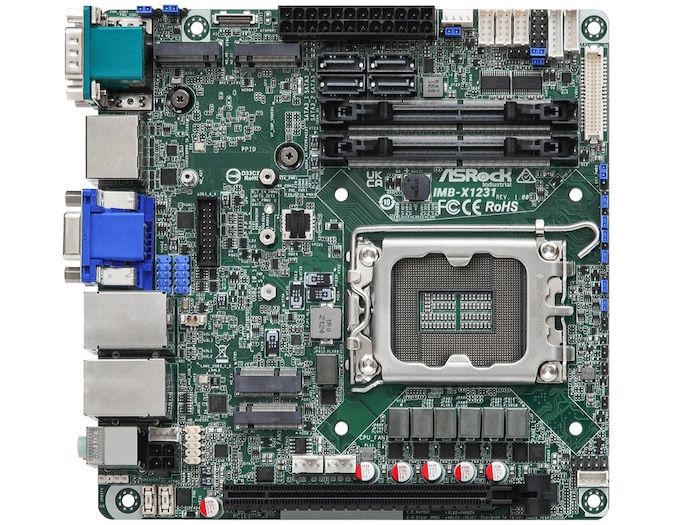

While Intel isn't technically launching any new chip SKUs (either desktop or mobile) with vPro support, the vPro desktop platform features are enabled through the use of specific motherboard chipsets, with Q670 and W680 being the only chipsets to support vPro on 14th Gen. Unless one of the specific chips listed above is installed in a Q670 or W680 motherboard, neither vPro Essentials nor vPro Enterprise will be enabled.

The 14th Gen, which is a direct refresh of the previous 13th Gen Core series family (Raptor Lake), follows a similar pattern to its predecessor. Specific SKUs from the 14th Gen family support only the full-fledged vPro Enterprise: the Core i5-14600K, the Core i7-14700K, and the flagship Core i9-14900K. Intel's vPro Enterprise security features are supported on both Q670 and W680 motherboards, giving users more choice in which board they opt for.

The rest of the above Intel 14th Gen Core series stack, including the non-monikered chips, e.g., the Core i5-14600, as well as the T series, which are optimized for efficient workloads with a lower TDP than the rest of the stack, all support both vPro Enterprise and vPro Essentials. This covers two processors from the Core i9 family, the Core i9-14900 and Core i9-14900T, two from the i7 series, the Core i7-14700 and Core i7-14700T, and four from the i5 series, the Core i5-14600, Core i5-14500, Core i5-14600T, and Core i5-14500T.

The ASRock Industrial IMB-X1231 W680 mini-ITX motherboard supports vPro Enterprise and Essentials

For the non-K processors mentioned above, different levels of vPro support are on offer. Users who wish to use a Q670 motherboard can specifically opt for Intel's cut-down vPro Essentials security features. Intel states that users with a Q670 or W680 board can use the full vPro Enterprise security features, and this also applies to the Core i9-14900K, the Core i7-14700K, and the Core i5-14600K. Outside of this, none of the 14th Gen SKUs with the KF (unlocked with no iGPU) and F (no iGPU) monikers are listed with support for vPro.
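To make the pairing rules above easier to check at a glance, here is a minimal Python sketch of them. It is not an Intel tool or API: the function name `vpro_tiers` and the lookup sets are hypothetical, and the data is simply transcribed from the desktop table in this article, under the assumption that both Q670 and W680 boards can expose whichever tiers a listed chip supports.

```python
# Illustrative sketch only; names are hypothetical, data is transcribed
# from the desktop table in this article.

# The two chipsets the article lists as supporting vPro on 14th Gen.
VPRO_CHIPSETS = {"Q670", "W680"}

# K-series SKUs are listed with vPro Enterprise only.
ENTERPRISE_ONLY = {"i9-14900K", "i7-14700K", "i5-14600K"}

# Non-K and T-series SKUs are listed with both tiers.
BOTH_TIERS = {
    "i9-14900", "i9-14900T",
    "i7-14700", "i7-14700T",
    "i5-14600", "i5-14500", "i5-14600T", "i5-14500T",
}


def vpro_tiers(sku: str, chipset: str) -> set[str]:
    """Return the vPro tiers available for a CPU/chipset pairing."""
    if chipset.upper() not in VPRO_CHIPSETS:
        return set()  # no vPro without a Q670 or W680 board
    if sku in ENTERPRISE_ONLY:
        return {"Enterprise"}
    if sku in BOTH_TIERS:
        return {"Enterprise", "Essentials"}
    return set()  # KF, F, and any other unlisted SKU


if __name__ == "__main__":
    for sku, chipset in [("i9-14900K", "W680"), ("i5-14500T", "Q670"),
                         ("i7-14700", "Z790"), ("i5-14600KF", "Q670")]:
        print(sku, chipset, sorted(vpro_tiers(sku, chipset)))
```

A set is returned rather than a single label because the non-K chips are listed with both tiers, so the caller can test for whichever tier it cares about.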

Intel Meteor Lake with vPro: Core Ultra H and U Series get Varied vPro Support

In addition to the Intel 14th Gen Core series for desktops, Intel has also enabled vPro support for its latest Meteor Lake-based Core Ultra H and U series mobile processors. Things are a little different in the mobile space compared to the desktop platform, as each mobile SKU comes with either vPro Enterprise or vPro Essentials, not both.

Intel Core Ultra H and U-Series Processors with vPro (Meteor Lake)

| AnandTech | Cores (P+E+LP/T) | P-Core Turbo Freq (MHz) | E-Core Turbo Freq (MHz) | GPU | GPU Freq (MHz) | L3 Cache (MB) | vPro Support (Ent/Ess) | Base TDP | Turbo TDP |
|---|---|---|---|---|---|---|---|---|---|
| Ultra 9 | | | | | | | | | |
| Core Ultra 9 185H | 6+8+2/22 | 5100 | 3800 | Arc Xe (8) | 2350 | 24 | Enterprise | 45 W | 115 W |
| Ultra 7 | | | | | | | | | |
| Core Ultra 7 165H | 6+8+2/22 | 5000 | 3800 | Arc Xe (8) | 2300 | 24 | Enterprise | 28 W | 64/115 W |
| Core Ultra 7 155H | 6+8+2/22 | 4800 | 3800 | Arc Xe (8) | 2250 | 24 | Essentials | 28 W | 64/115 W |
| Core Ultra 7 165U | 2+8+2/14 | 4900 | 3800 | Arc Xe (4) | 2000 | 12 | Enterprise | 15 W | 57 W |
| Core Ultra 7 164U | 2+8+2/14 | 4800 | 3800 | Arc Xe (4) | 1800 | 12 | Enterprise | 9 W | 30 W |
| Core Ultra 7 155U | 2+8+2/14 | 4800 | 3800 | Arc Xe (4) | 1950 | 12 | Essentials | 15 W | 57 W |
| Ultra 5 | | | | | | | | | |
| Core Ultra 5 135H | 4+8+2/18 | 4600 | 3600 | Arc Xe (7) | 2200 | 18 | Enterprise | 28 W | 64/115 W |
| Core Ultra 5 125H | 4+8+2/18 | 4500 | 3600 | Arc Xe (7) | 2200 | 18 | Essentials | 28 W | 64/115 W |
| Core Ultra 5 135U | 2+8+2/14 | 4400 | 3600 | Arc Xe (4) | 1900 | 12 | Enterprise | 15 W | 57 W |
| Core Ultra 5 134U | 2+8+2/14 | 4400 | 3800 | Arc Xe (4) | 1750 | 12 | Enterprise | 9 W | 30 W |
| Core Ultra 5 125U | 2+8+2/14 | 4300 | 3600 | Arc Xe (4) | 1850 | 12 | Essentials | 15 W | 57 W |

The above table highlights not just the specifications of each Core Ultra 9, 7, and 5 SKU but also denotes which model gets what level of vPro support. Starting with the Core Ultra 9 185H processor, the current mobile flagship chip on Meteor Lake, this chip supports vPro Enterprise. The top-tier H series SKUs from the Core Ultra 7 and 5 families, the Core Ultra 7 165H and the Core Ultra 5 135H, also support vPro Enterprise, as do the Core Ultra 7 165U and Core Ultra 7 164U, and the Core Ultra 5 135U and Core Ultra 5 134U.

Intel's other Meteor Lake chips, including the Core Ultra 7 155H, the Core Ultra 7 155U, the Core Ultra 5 125H, and the Core Ultra 5 125U, only come with support for Intel's vPro Essentials features and not for Enterprise. This presents a slight 'dropping of the ball' from Intel, which we highlighted in our Intel 13th Gen Core gets vPro piece last year.

Intel vPro Support Announcement With No New Hardware, Why Announce Later?

It is worth noting that Intel's announcement of adding vPro support to its first launch of Meteor Lake Core Ultra SKUs isn't entirely new; Intel did highlight that Meteor Lake would support vPro last year within its Series 1 Product Brief dated 12/20/2023. Intel's formal announcement of vPro support for Meteor Lake is more about which SKU has which level of support, and we feel this could pose problems for users who have already purchased Core Ultra series notebooks for business and enterprise use. Listings at multiple outlets, including Newegg and HP's own store, aren't mentioning vPro whatsoever.

This could mean that a user has purchased a notebook with, say, a Core Ultra 5 125H (vPro Essentials), either for use within an SME or as part of a bulk purchase by said SME, without being aware that the chip doesn't have vPro Enterprise, whose additional security features could benefit them both personally and from a business standpoint. We reached out to Intel, and they sent us the following statement.

"Since we are launching vPro powered by Intel Core Ultra & Intel Core 14th Gen this week, prospective buyers will begin seeing the relevant system information on OEM and enterprise retail partner (eg. CDW) websites in the weeks ahead. This will include information on whether a system is equipped with vPro Enterprise or Essentials so that they can purchase the right system for their compute needs."